rdb alternatives and similar packages

Based on the "Database" category.


tidb

TiDB is an open-source, cloud-native, distributed, MySQL-Compatible database for elastic scale and real-time analytics. Try AI-powered Chat2Query free at: https://tidbcloud.com/free-trial

cockroach

CockroachDB - the open source, cloud-native distributed SQL database.

Milvus

A cloud-native vector database, storage for next generation AI applications

vitess

Vitess is a database clustering system for horizontal scaling of MySQL.

TinyGo

Go compiler for small places. Microcontrollers, WebAssembly (WASM/WASI), and command-line tools. Based on LLVM.

groupcache

groupcache is a caching and cache-filling library, intended as a replacement for memcached in many cases.

VictoriaMetrics

VictoriaMetrics: fast, cost-effective monitoring solution and time series database

bytebase

The GitLab/GitHub for database DevOps. World's most advanced database DevOps and CI/CD for Developer, DBA and Platform Engineering teams.

go-cache

An in-memory key:value store/cache (similar to Memcached) library for Go, suitable for single-machine applications.

immudb

immudb - immutable database based on zero trust, SQL/Key-Value/Document model, tamperproof, data change history

rosedb

Lightweight, fast and reliable key/value storage engine based on Bitcask.

buntdb

BuntDB is an embeddable, in-memory key/value database for Go with custom indexing and geospatial support

pREST

PostgreSQL ➕ REST, low-code, simplify and accelerate development, ⚡ instant, realtime, high-performance on any Postgres application, existing or new

xo

Command line tool to generate idiomatic Go code for SQL databases supporting PostgreSQL, MySQL, SQLite, Oracle, and Microsoft SQL Server

tiedot

A rudimentary implementation of a basic document (NoSQL) database in Go

nutsdb

A simple, fast, embeddable, persistent key/value store written in pure Go. It supports fully serializable transactions and many data structures such as list, set, sorted set.

LinDB

LinDB is a scalable, high performance, high availability distributed time series database.

cache2go

Concurrency-safe Go caching library with expiration capabilities and access counters

GCache

An in-memory cache library for golang. It supports multiple eviction policies: LRU, LFU, ARC

gocraft/dbr (database records)

Additions to Go's database/sql for super fast performance and convenience.

lotusdb

Most advanced key-value database written in Go, extremely fast, compatible with LSM tree and B+ tree.

fastcache

Fast thread-safe inmemory cache for big number of entries in Go. Minimizes GC overhead

README

This is a Redis RDB parser implemented in Go, intended for secondary development and memory analysis.

It provides abilities to:

- Generate memory report for rdb file

- Convert RDB files to JSON

- Convert RDB files to Redis Serialization Protocol (or AOF file)

- Find the biggest N keys in RDB files

- Draw a flame graph to analyze which kinds of keys occupy the most memory

- Customize data usage

- Generate RDB file

Supported RDB versions: 1 <= version <= 10 (Redis 7.0)

If you read Chinese, you can find a thorough introduction to the RDB file format here: Golang 实现 Redis(11): RDB 文件格式 ("Implementing Redis in Golang (11): The RDB File Format")

Thanks to sripathikrishnan for his redis-rdb-tools.

Install

If you have Go installed on your computer, simply run:

go install github.com/hdt3213/rdb@latest

Alternatively, download an executable binary from the releases page and add its location to your PATH environment variable.

Run the rdb command in a terminal to see its manual:

    This is a tool to parse Redis' RDB files
    Options:
      -c command, including: json/memory/aof/bigkey/flamegraph
      -o output file path
      -n number of result, using in
      -port listen port for flame graph web service
      -sep separator for flamegraph, rdb will separate key by it, default value is ":".
            supporting multi separators: -sep sep1 -sep sep2
      -regex using regex expression filter keys

    Examples:
    parameters between '[' and ']' is optional
    1. convert rdb to json
      rdb -c json -o dump.json dump.rdb
    2. generate memory report
      rdb -c memory -o memory.csv dump.rdb
    3. convert to aof file
      rdb -c aof -o dump.aof dump.rdb
    4. get largest keys
      rdb -c bigkey [-o dump.aof] [-n 10] dump.rdb
    5. draw flamegraph
      rdb -c flamegraph [-port 16379] [-sep :] dump.rdb

Convert to JSON

Usage:

rdb -c json -o <output_path> <source_path>

Example:

rdb -c json -o intset_16.json cases/intset_16.rdb

You can find some example RDB files in the cases directory.

Example JSON result:

    [
        {"db":0,"key":"hash","size":64,"type":"hash","hash":{"ca32mbn2k3tp41iu":"ca32mbn2k3tp41iu","mddbhxnzsbklyp8c":"mddbhxnzsbklyp8c"}},
        {"db":0,"key":"string","size":10,"type":"string","value":"aaaaaaa"},
        {"db":0,"key":"expiration","expiration":"2022-02-18T06:15:29.18+08:00","size":8,"type":"string","value":"zxcvb"},
        {"db":0,"key":"list","expiration":"2022-02-18T06:15:29.18+08:00","size":66,"type":"list","values":["7fbn7xhcnu","lmproj6c2e","e5lom29act","yy3ux925do"]},
        {"db":0,"key":"zset","expiration":"2022-02-18T06:15:29.18+08:00","size":57,"type":"zset","entries":[{"member":"zn4ejjo4ths63irg","score":1},{"member":"1ik4jifkg6olxf5n","score":2}]},
        {"db":0,"key":"set","expiration":"2022-02-18T06:15:29.18+08:00","size":39,"type":"set","members":["2hzm5rnmkmwb3zqd","tdje6bk22c6ddlrw"]}
    ]
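If you want to post-process the JSON output in your own program, a minimal sketch along these lines may help. It assumes the output keeps the shape of the sample above; the `entry` type and `decodeEntries` helper are names invented for this illustration and are not part of the rdb library. Only the fields shared by every object are decoded; type-specific fields such as `hash`, `values`, `entries`, `members` and `value` are ignored.

```go
package main

import (
	"encoding/json"
	"fmt"
	"time"
)

// entry holds the fields that appear on every object in the sample output.
// It is a hypothetical type for this sketch, not a type from the rdb library.
type entry struct {
	DB         int        `json:"db"`
	Key        string     `json:"key"`
	Type       string     `json:"type"`
	Size       int        `json:"size"`
	Expiration *time.Time `json:"expiration,omitempty"` // nil when the key has no expiration
}

// decodeEntries parses a JSON array shaped like the sample result.
func decodeEntries(data string) ([]entry, error) {
	var entries []entry
	if err := json.Unmarshal([]byte(data), &entries); err != nil {
		return nil, err
	}
	return entries, nil
}

func main() {
	sample := `[
{"db":0,"key":"string","size":10,"type":"string","value":"aaaaaaa"},
{"db":0,"key":"expiration","expiration":"2022-02-18T06:15:29.18+08:00","size":8,"type":"string","value":"zxcvb"}
]`
	entries, err := decodeEntries(sample)
	if err != nil {
		panic(err)
	}
	for _, e := range entries {
		fmt.Println(e.Key, e.Type, e.Size, e.Expiration != nil)
	}
}
```

For very large dumps, streaming the array with `json.Decoder` instead of loading it whole would probably be a better fit.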

Generate Memory Report

The rdb tool uses the RDB-encoded size of each key to estimate Redis memory usage.

Usage:

rdb -c memory -o <output_path> <source_path>

Example:

rdb -c memory -o mem.csv cases/memory.rdb

Example CSV result:

    database,key,type,size,size_readable,element_count
    0,hash,hash,64,64B,2
    0,s,string,10,10B,0
    0,e,string,8,8B,0
    0,list,list,66,66B,4
    0,zset,zset,57,57B,2
    0,large,string,2056,2K,0
    0,set,set,39,39B,2
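The report is plain CSV, so it can be summarized with the Go standard library. The sketch below totals the `size` column per `type`; it assumes the column order shown in the sample header, and `sizeByType` is a helper name made up for this example.

```go
package main

import (
	"encoding/csv"
	"fmt"
	"strconv"
	"strings"
)

// sizeByType sums the "size" column per "type" for a report laid out as:
// database,key,type,size,size_readable,element_count
// The column positions are an assumption taken from the sample header.
func sizeByType(report string) (map[string]int64, error) {
	rows, err := csv.NewReader(strings.NewReader(report)).ReadAll()
	if err != nil {
		return nil, err
	}
	totals := make(map[string]int64)
	for i, row := range rows {
		if i == 0 {
			continue // skip the header row
		}
		size, err := strconv.ParseInt(row[3], 10, 64)
		if err != nil {
			return nil, fmt.Errorf("row %d: %w", i+1, err)
		}
		totals[row[2]] += size
	}
	return totals, nil
}

func main() {
	report := `database,key,type,size,size_readable,element_count
0,hash,hash,64,64B,2
0,s,string,10,10B,0
0,e,string,8,8B,0
0,list,list,66,66B,4
0,zset,zset,57,57B,2
0,large,string,2056,2K,0
0,set,set,39,39B,2
`
	totals, err := sizeByType(report)
	if err != nil {
		panic(err)
	}
	fmt.Println("string bytes:", totals["string"])
	fmt.Println("list bytes:", totals["list"])
}
```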

Find The Biggest Keys

The rdb tool can find the N biggest keys in an RDB file.

Usage:

rdb -c bigkey -n <result_number> <source_path>

Example:

rdb -c bigkey -n 5 cases/memory.rdb

Example CSV result:

    database,key,type,size,size_readable,element_count
    0,large,string,2056,2K,0
    0,list,list,66,66B,4
    0,hash,hash,64,64B,2
    0,zset,zset,57,57B,2
    0,set,set,39,39B,2
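To illustrate what "biggest N keys" means, here is a small sketch that sorts keys by size and keeps the first N. It is not the tool's own implementation (which is not shown here); `keyInfo` and `topN` are hypothetical names, and the sizes are taken from the memory report sample.

```go
package main

import (
	"fmt"
	"sort"
)

// keyInfo pairs a key name with its estimated size in bytes.
// Hypothetical type for this sketch only.
type keyInfo struct {
	Key  string
	Size int
}

// topN returns the n largest keys, biggest first, without modifying the input.
func topN(keys []keyInfo, n int) []keyInfo {
	sorted := append([]keyInfo(nil), keys...) // copy so the caller's slice stays untouched
	sort.SliceStable(sorted, func(i, j int) bool {
		return sorted[i].Size > sorted[j].Size
	})
	if n < 0 {
		n = 0
	}
	if n > len(sorted) {
		n = len(sorted)
	}
	return sorted[:n]
}

func main() {
	keys := []keyInfo{
		{"hash", 64}, {"s", 10}, {"e", 8}, {"list", 66},
		{"zset", 57}, {"large", 2056}, {"set", 39},
	}
	for _, k := range topN(keys, 5) {
		fmt.Println(k.Key, k.Size)
	}
}
```

Sorting everything is the simplest approach; for files with many keys, a bounded min-heap of size N would avoid holding and sorting the full key list.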

Convert to AOF

Usage:

rdb -c aof -o <output_path> <source_path>

Example:

rdb -c aof -o mem.aof cases/memory.rdb

Example AOF result:

    *3
    $3
    SET
    $1
    s
    $7
    aaaaaaa
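The output above is a Redis command encoded as a RESP array: `*3` is the number of elements, and each `$n` line gives the byte length of the bulk string that follows, with every line terminated by CRLF. As a rough sketch of that encoding (the `encodeRESP` helper is a name invented here, not a function of the rdb library):

```go
package main

import (
	"fmt"
	"strconv"
	"strings"
)

// encodeRESP encodes a command and its arguments as a RESP array of bulk strings.
func encodeRESP(args ...string) string {
	var b strings.Builder
	b.WriteString("*" + strconv.Itoa(len(args)) + "\r\n") // array header: element count
	for _, arg := range args {
		b.WriteString("$" + strconv.Itoa(len(arg)) + "\r\n") // bulk string header: byte length
		b.WriteString(arg + "\r\n")
	}
	return b.String()
}

func main() {
	// %q makes the CRLF line endings visible.
	fmt.Printf("%q\n", encodeRESP("SET", "s", "aaaaaaa"))
}
```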

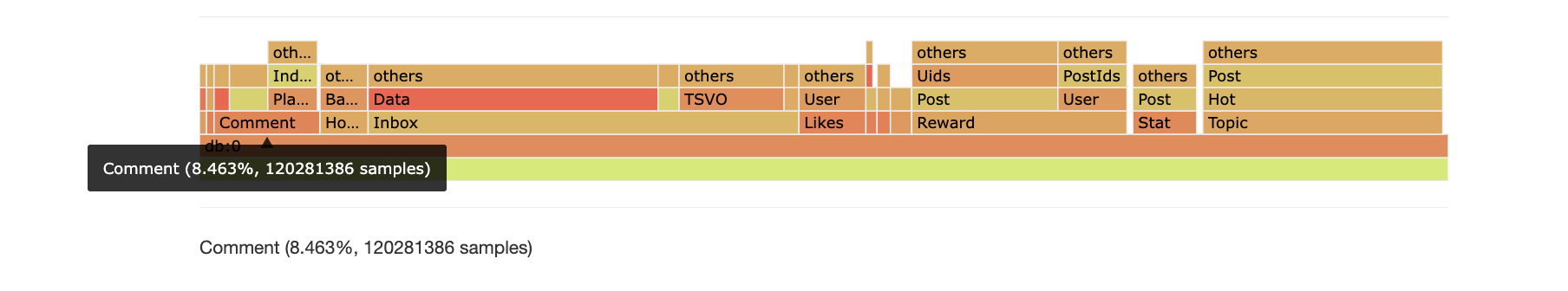

Flame Graph

In many cases, most of the memory is occupied not by a few very large keys but by a large number of small keys.

The rdb tool can split keys by the given delimiters and then aggregate keys that share the same prefix.

Finally, the rdb tool presents the result as a flame graph, which lets you find out which kinds of keys consume the most memory.

In this example, keys matching the pattern Comment:* use 8.463% of memory.
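The prefix aggregation described above can be sketched roughly as follows. This is a simplified illustration that groups keys only by their first segment; the tool itself may aggregate over several levels. The function names and the sample keys and sizes are made up for this example.

```go
package main

import (
	"fmt"
	"strings"
)

// firstSegment returns the part of key before the earliest occurrence of any separator.
// If no separator occurs, the whole key is returned.
func firstSegment(key string, seps []string) string {
	cut := len(key)
	for _, sep := range seps {
		if sep == "" {
			continue
		}
		if i := strings.Index(key, sep); i >= 0 && i < cut {
			cut = i
		}
	}
	return key[:cut]
}

// aggregateByPrefix sums key sizes per first segment.
func aggregateByPrefix(sizes map[string]int, seps []string) map[string]int {
	totals := make(map[string]int)
	for key, size := range sizes {
		totals[firstSegment(key, seps)] += size
	}
	return totals
}

func main() {
	// Hypothetical keys and sizes, for illustration only.
	sizes := map[string]int{
		"Comment:1": 10,
		"Comment:2": 20,
		"User-7":    5,
	}
	totals := aggregateByPrefix(sizes, []string{":", "-"})
	fmt.Println("Comment:", totals["Comment"])
	fmt.Println("User:", totals["User"])
}
```

Dividing a prefix total by the sum of all sizes gives the kind of percentage shown in the flame graph.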

Usage:

rdb -c flamegraph [-port <port>] [-sep <separator1>] [-sep <separator2>] <source_path>

Example:

rdb -c flamegraph -port 16379 -sep : dump.rdb

Regex Filter

The rdb tool supports filtering keys with a regular expression.

Example:

rdb -c json -o regex.json -regex '^l.*' cases/memory.rdb
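To preview which keys a pattern would select, you could try it against a list of key names first. The sketch below assumes the filter is matched against the key name using Go's `regexp` syntax, which seems likely for a Go tool but is not confirmed here; `filterKeys` is a hypothetical helper.

```go
package main

import (
	"fmt"
	"regexp"
)

// filterKeys returns the keys that match pattern, in their original order.
func filterKeys(pattern string, keys []string) ([]string, error) {
	re, err := regexp.Compile(pattern)
	if err != nil {
		return nil, err
	}
	var matched []string
	for _, key := range keys {
		if re.MatchString(key) {
			matched = append(matched, key)
		}
	}
	return matched, nil
}

func main() {
	// Key names taken from the memory report sample.
	keys := []string{"hash", "s", "e", "list", "zset", "large", "set"}
	matched, err := filterKeys("^l.*", keys)
	if err != nil {
		panic(err)
	}
	fmt.Println(matched)
}
```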

Customize data usage

    package main

    import (
        "github.com/hdt3213/rdb/parser"
        "os"
    )

    func main() {
        rdbFile, err := os.Open("dump.rdb")
        if err != nil {
            panic("open dump.rdb failed")
        }
        defer func() {
            _ = rdbFile.Close()
        }()
        decoder := parser.NewDecoder(rdbFile)
        err = decoder.Parse(func(o parser.RedisObject) bool {
            switch o.GetType() {
            case parser.StringType:
                str := o.(*parser.StringObject)
                println(str.Key, str.Value)
            case parser.ListType:
                list := o.(*parser.ListObject)
                println(list.Key, list.Values)
            case parser.HashType:
                hash := o.(*parser.HashObject)
                println(hash.Key, hash.Hash)
            case parser.ZSetType:
                zset := o.(*parser.ZSetObject)
                println(zset.Key, zset.Entries)
            }
            // return true to continue, return false to stop the iteration
            return true
        })
        if err != nil {
            panic(err)
        }
    }

Generate RDB file

This library can also generate RDB files:

    package main

    import (
        "github.com/hdt3213/rdb/encoder"
        "github.com/hdt3213/rdb/model"
        "os"
        "time"
    )

    func main() {
        rdbFile, err := os.Create("dump.rdb")
        if err != nil {
            panic(err)
        }
        defer rdbFile.Close()
        enc := encoder.NewEncoder(rdbFile)
        err = enc.WriteHeader()
        if err != nil {
            panic(err)
        }
        auxMap := map[string]string{
            "redis-ver":    "4.0.6",
            "redis-bits":   "64",
            "aof-preamble": "0",
        }
        for k, v := range auxMap {
            err = enc.WriteAux(k, v)
            if err != nil {
                panic(err)
            }
        }
        err = enc.WriteDBHeader(0, 5, 1)
        if err != nil {
            panic(err)
        }
        // expiration timestamp in milliseconds, 8 hours from now
        expirationMs := uint64(time.Now().Add(time.Hour*8).Unix() * 1000)
        err = enc.WriteStringObject("hello", []byte("world"), encoder.WithTTL(expirationMs))
        if err != nil {
            panic(err)
        }
        err = enc.WriteListObject("list", [][]byte{
            []byte("123"),
            []byte("abc"),
            []byte("la la la"),
        })
        if err != nil {
            panic(err)
        }
        err = enc.WriteSetObject("set", [][]byte{
            []byte("123"),
            []byte("abc"),
            []byte("la la la"),
        })
        if err != nil {
            panic(err)
        }
        // use a distinct key for each object so that no key is written twice
        err = enc.WriteHashMapObject("hash", map[string][]byte{
            "1":  []byte("123"),
            "a":  []byte("abc"),
            "la": []byte("la la la"),
        })
        if err != nil {
            panic(err)
        }
        err = enc.WriteZSetObject("zset", []*model.ZSetEntry{
            {
                Score:  1.234,
                Member: "a",
            },
            {
                Score:  2.71828,
                Member: "b",
            },
        })
        if err != nil {
            panic(err)
        }
        err = enc.WriteEnd()
        if err != nil {
            panic(err)
        }
    }

Benchmark

Tested on a MacBook Pro (16-inch, 2019) with a 2.6 GHz 6-core Intel Core i7, using a 1.3 GB RDB file in v9 format produced by Redis 5.0 in a production environment.

| Usage | Elapsed | Speed |
|---|---|---|
| ToJson | 144.11s | 9.23MB/s |
| Memory | 18.585s | 71.62MB/s |
| AOF | 104.77s | 12.76MB/s |
| Top10 | 14.8s | 89.95MB/s |
| FlameGraph | 49.38s | 26.96MB/s |
